Updated 21 April 2026 with new licensing details.

Most businesses we speak with aren’t choosing between using AI and not using it. Their staff are already using AI tools in some form, whether that’s ChatGPT, Google Gemini, Claude, or something else. The question that actually matters is whether those tools are the right ones for a business environment, and whether they’re being used in a way that’s safe and appropriate.

This is where Microsoft 365 Copilot sits in a different category from most of the AI tools your team might reach for on their own. Understanding the difference helps you make a more informed decision about where to invest and how to structure AI use across your business.

What all these tools have in common

ChatGPT, Claude, Google Gemini, Microsoft Copilot, and similar tools are all built on large language models — AI trained on enormous amounts of text that can generate, summarise, translate, and reason through language-based tasks. At a basic level, they all do similar things: help you write faster, summarise information, answer questions, and work through complex problems.

For simple tasks — drafting a quick email, brainstorming ideas, explaining a concept in plain language — most of these tools perform well. The differences become significant when you move into business-critical work, when you need the AI to work with your actual company information, and when data security matters.

The fundamental difference: what data can the AI access?

This is the distinction that matters most for businesses, and it’s the one most often overlooked.

Consumer AI tools like ChatGPT and Google Gemini (in their standard free or subscription forms) are general-purpose assistants. They work from publicly available knowledge and whatever information you type or paste into them. They don’t know anything about your business unless you tell them. And when you do tell them — by pasting in a client email, an internal report, or a proposal you’re working on — that information is going into an external system that isn’t governed by your security policies.

This is a risk most businesses haven’t fully reckoned with. We regularly work with organisations where staff have been using ChatGPT or similar tools productively for months, and where nobody has stopped to ask what data is being pasted in: confidential client information, internal financial data, sensitive HR content. Once that data is in an external AI tool, you no longer control where it is stored, how long it is retained, or, depending on the tool and its settings, whether it is used to train future models.

Microsoft 365 Copilot operates within your Microsoft 365 environment. It can draw on your SharePoint files, your emails, your Teams conversations, and your documents, but that content stays inside your Microsoft 365 tenant, subject to your existing security policies and access controls. Copilot only surfaces content the signed-in user already has permission to see, and Microsoft’s enterprise data protection commitments apply. This is what makes Copilot fundamentally different from general-purpose AI tools when it comes to business use.

How the tools compare on practical dimensions

Access to your business information

General AI tools: No. You have to manually provide context in every conversation, and providing it means sending data externally.

Microsoft 365 Copilot: Yes. It can draw on your documents, emails, Teams threads, and files — securely within your Microsoft environment.

Integration with your existing tools

General AI tools: Limited. You can copy content in and out, but there’s no native connection to Outlook, Word, Teams, or the other tools your team uses every day.

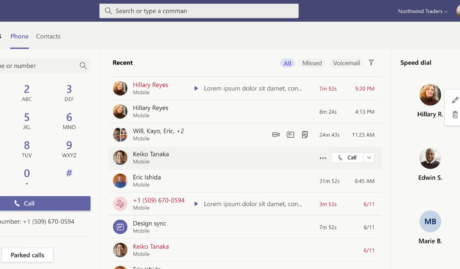

Microsoft 365 Copilot: Deep. Copilot is built directly into the apps your team already uses. In Outlook, it can summarise long email threads. In Teams, it can recap meetings you missed. In Word, it can draft from scratch or improve existing content. In Excel, it can analyse data and surface insights.

Data security and governance

General AI tools: Variable, and often inappropriate for business use. Consumer AI terms of service are not designed for organisational data. Enterprise tiers of some tools offer better protections, but require separate evaluation.

Microsoft 365 Copilot: Built on Microsoft’s enterprise security framework. Data stays within your Microsoft 365 tenant. Existing permissions and access controls apply. Can meet Australian data residency requirements when configured correctly.

Setup and administration

General AI tools: Minimal. Most are consumer products that individuals sign up for and use independently. This is also part of the risk: there’s no central visibility into what your team is using them for.

Microsoft 365 Copilot: Requires proper deployment and configuration. This is not a plug-and-play product. Your Microsoft 365 environment needs to be in good shape, permissions need to be configured appropriately (Copilot can surface anything a user already has access to, so over-shared files become easier to find), and adoption needs to be managed deliberately. That is additional effort, but it’s also what makes Copilot appropriate for a business environment.

Cost

General AI tools: Free or low-cost consumer tiers are widely available. Enterprise tiers with better security controls cost more.

Microsoft 365 Copilot: AU$26.91 per user per month (excluding GST, current promotional pricing) as an add-on to a qualifying Microsoft 365 subscription. This is a meaningful investment, and the return depends on proper implementation.

So which one is right for your business?

For individual, low-stakes tasks where no company information is involved, general AI tools are useful, and many of your staff are probably already using them. That’s not necessarily a problem.

For any work involving your company’s own information — client data, internal documents, financial information, or anything that could be sensitive — Microsoft 365 Copilot is the appropriate choice. It’s the tool that was designed for a business environment, with the security architecture to match.

The honest recommendation for most Brisbane SMEs: start by getting clear on what your staff are already doing with AI tools. That conversation often reveals both the risk exposure and the productivity opportunity more clearly than any comparison article can. Then assess whether your Microsoft 365 environment is ready for Copilot, because the quality of deployment determines the quality of results.

We’ve seen businesses get meaningful value from Copilot and we’ve seen businesses find it underwhelming — and the difference is almost always in how it was set up and adopted, not in the underlying technology. If you’re considering it, a readiness assessment is the sensible first step.

Grassroots IT is a Brisbane-based managed IT services provider specialising in Microsoft solutions for SMEs. Learn more about Microsoft Copilot implementation at grassrootsit.com.au/microsoft-copilot/